AD3501 – Deep Learning is a Semester V subject of the B.Tech Artificial Intelligence and Data Science programme offered by institutions affiliated to Anna University, under the Regulation 2021 syllabus. This article brings together the key information for the subject, compiled by subject experts.

We have listed the prescribed textbooks and references to support your preparation. The detailed syllabus is given unit-wise below, with every topic of each unit included. We hope this AD3501 – Deep Learning Syllabus article helps you prepare well for the examinations. Don’t forget to share it with your friends.

To learn more about the syllabus of the four-year B.Tech Artificial Intelligence And Data Science undergraduate degree programme at affiliated institutions, see the detailed year-wise, semester-wise, and subject-wise syllabus at the following link: B.Tech Artificial Intelligence And Data Science Syllabus Anna University, Regulation 2021.

Aim and Objectives:

- To understand the need for and principles of deep neural networks

- To understand CNN and RNN architectures of deep neural networks

- To comprehend advanced deep learning models

- To learn the evaluation metrics for deep learning models

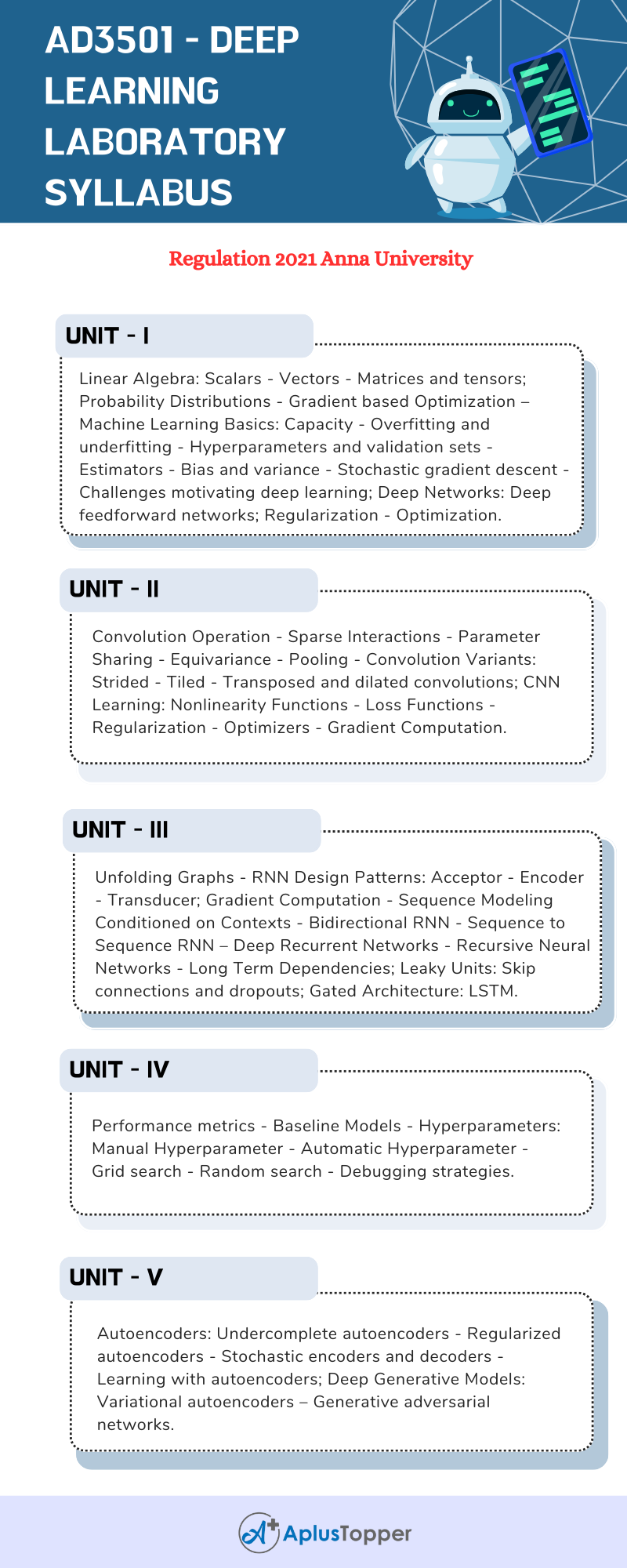

AD3501 – Deep Learning Syllabus

Unit I: Deep Networks Basics

Linear Algebra: Scalars – Vectors – Matrices and tensors; Probability Distributions – Gradient-based Optimization – Machine Learning Basics: Capacity – Overfitting and underfitting – Hyperparameters and validation sets – Estimators – Bias and variance – Stochastic gradient descent – Challenges motivating deep learning; Deep Networks: Deep feedforward networks; Regularization – Optimization.
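As a quick illustration of the gradient-based optimization topic in this unit, here is a minimal sketch in plain Python. The function name `gradient_descent`, the toy objective f(x) = (x - 3)^2, and the learning rate are assumptions chosen for illustration; they are not part of the prescribed syllabus.

```python
def gradient_descent(grad, x0, learning_rate=0.1, steps=100):
    """Repeatedly step against the gradient, starting from x0."""
    x = x0
    for _ in range(steps):
        x -= learning_rate * grad(x)
    return x

# Toy objective (assumed for illustration): f(x) = (x - 3)^2,
# whose gradient is 2 * (x - 3) and whose minimum is at x = 3.
minimum = gradient_descent(lambda x: 2 * (x - 3), x0=0.0)
print(minimum)
```

Stochastic gradient descent follows the same update rule but estimates the gradient from a small random batch of training examples at each step.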

Unit II: Convolutional Neural Networks

Convolution Operation – Sparse Interactions – Parameter Sharing – Equivariance – Pooling – Convolution Variants: Strided – Tiled – Transposed and dilated convolutions; CNN Learning: Nonlinearity Functions – Loss Functions – Regularization – Optimizers – Gradient Computation.
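To make the convolution variants in this unit concrete, here is a small sketch of a one-dimensional convolution (implemented as cross-correlation, as is common in CNN layers) with optional stride and dilation. The helper name `conv1d` and the sample values are hypothetical and only meant to illustrate the idea, with no padding applied.

```python
def conv1d(signal, kernel, stride=1, dilation=1):
    """Valid (no padding) 1-D cross-correlation with stride and dilation."""
    # Width of the input window covered by the (possibly dilated) kernel.
    span = (len(kernel) - 1) * dilation + 1
    out = []
    for start in range(0, len(signal) - span + 1, stride):
        # The same kernel weights are reused at every position (parameter sharing).
        out.append(sum(w * signal[start + k * dilation]
                       for k, w in enumerate(kernel)))
    return out

signal = [1, 2, 3, 4, 5, 6]
kernel = [1, 0, -1]

plain = conv1d(signal, kernel)               # [-2, -2, -2, -2]
strided = conv1d(signal, kernel, stride=2)   # [-2, -2]
dilated = conv1d(signal, kernel, dilation=2) # [-4, -4]
```

Each output depends on only a few neighbouring inputs (sparse interactions). Stride skips output positions, while dilation widens the window without adding weights.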

Unit III: Recurrent Neural Networks

Unfolding Graphs – RNN Design Patterns: Acceptor – Encoder – Transducer; Gradient Computation – Sequence Modeling Conditioned on Contexts – Bidirectional RNN – Sequence to Sequence RNN – Deep Recurrent Networks – Recursive Neural Networks – Long Term Dependencies; Leaky Units: Skip connections and dropouts; Gated Architecture: LSTM.

Unit IV: Model Evaluation

Performance metrics – Baseline Models – Hyperparameters: Manual Hyperparameter – Automatic Hyperparameter – Grid search – Random search – Debugging strategies.
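The difference between grid search and random search can be sketched as follows. The `validation_loss` function is a hypothetical stand-in for training a model and scoring it on a validation set, and the hyperparameter names and ranges are assumptions made for illustration.

```python
import itertools
import random

def validation_loss(learning_rate, hidden_units):
    """Hypothetical stand-in for training a model and measuring validation loss."""
    return (learning_rate - 0.01) ** 2 * 1e4 + (hidden_units - 64) ** 2 / 1e3

def grid_search(learning_rates, hidden_sizes):
    """Evaluate every combination on a fixed grid and keep the best one."""
    candidates = itertools.product(learning_rates, hidden_sizes)
    return min(candidates, key=lambda c: validation_loss(*c))

def random_search(n_trials, seed=0):
    """Sample hyperparameters at random and keep the best one."""
    rng = random.Random(seed)
    best = None
    for _ in range(n_trials):
        # Learning rate sampled on a log scale, hidden units uniformly.
        candidate = (10 ** rng.uniform(-4, -1), rng.randint(16, 256))
        if best is None or validation_loss(*candidate) < validation_loss(*best):
            best = candidate
    return best

grid_best = grid_search([0.001, 0.01, 0.1], [32, 64, 128])
random_best = random_search(n_trials=20)
print(grid_best, random_best)
```

Grid search is exhaustive over the chosen values, so its cost grows quickly with the number of hyperparameters. Random search spends the same budget on more distinct values of each hyperparameter.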

Unit V: Autoencoders And Generative Models

Autoencoders: Undercomplete autoencoders – Regularized autoencoders – Stochastic encoders and decoders – Learning with autoencoders; Deep Generative Models: Variational autoencoders – Generative adversarial networks.

Text Books:

- Ian Goodfellow, Yoshua Bengio, Aaron Courville, “Deep Learning”, MIT Press, 2016.

- Andrew Glassner, “Deep Learning: A Visual Approach”, No Starch Press, 2021.

References:

- Salman Khan, Hossein Rahmani, Syed Afaq Ali Shah, Mohammed Bennamoun, “A Guide to Convolutional Neural Networks for Computer Vision”, Synthesis Lectures on Computer Vision, Morgan & Claypool Publishers, 2018.

- Yoav Goldberg, “Neural Network Methods for Natural Language Processing”, Synthesis Lectures on Human Language Technologies, Morgan & Claypool Publishers, 2017.

- Francois Chollet, “Deep Learning with Python”, Manning Publications Co, 2018.

- Charu C. Aggarwal, “Neural Networks and Deep Learning: A Textbook”, Springer International Publishing, 2018.

- Josh Patterson, Adam Gibson, “Deep Learning: A Practitioner’s Approach”, O’Reilly Media, 2017.
