Kerala Plus Two Computer Science Notes Chapter 11 Advances in Computing

Distributed Computing Paradigms

A very large program can be subdivided into smaller ones, and these smaller programs are distributed to free computers over a network. This ensures that the computers are used effectively. The term paradigm means a pattern or a model in the study of any complex subject. Advanced computing paradigms help to process information for many sectors of our society. They include parallel computing, cluster computing, grid computing, cloud computing, etc.

Distributed Computing

Definition: Distributed computing is a method of computing in which a large problem is divided into smaller ones and these smaller problems are distributed among several computers. The solutions for the smaller problems are computed separately and simultaneously. Finally, the results are assembled to get the desired overall solution.
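The divide, compute and assemble steps in this definition can be sketched in Python. This is a minimal simulation, assuming the large problem is counting the words in a long text; a thread pool stands in for the separate computers, and the function names (`split_problem`, `solve_part`, `assemble`) are hypothetical.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def split_problem(text: str, parts: int) -> list:
    """Divide the large problem (a long text) into about `parts` chunks of lines."""
    lines = text.splitlines()
    size = max(1, -(-len(lines) // parts))  # ceiling division
    return ["\n".join(lines[i:i + size]) for i in range(0, len(lines), size)]


def solve_part(chunk: str) -> Counter:
    """The work one computer does on its own: count the words in its chunk."""
    return Counter(chunk.lower().split())


def assemble(partials) -> Counter:
    """Combine the partial results into the overall solution."""
    total = Counter()
    for partial in partials:
        total.update(partial)  # adds the counts from each partial result
    return total


def distributed_word_count(text: str, workers: int = 3) -> Counter:
    chunks = split_problem(text, workers)
    # Each worker thread plays the role of one computer on the network;
    # the chunks are processed separately and at the same time.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(solve_part, chunks))
    return assemble(partials)


if __name__ == "__main__":
    doc = "the cat sat\nthe dog ran\nthe cat ran\na dog sat"
    print(distributed_word_count(doc).most_common(3))
```

In a real distributed system the chunks would be sent to other machines (for example by a framework such as Hadoop MapReduce), but the three steps of dividing, solving and assembling stay the same.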

Advantages

- Economical: It reduces the computing cost, hence it is economical.

- Speed: The workload is shared among many computers, so the work is completed faster.

- Reliability: When one computer in the network fails, the entire work is not blocked; the other computers carry on with the work.

- Scalability: Computers can be added according to the workload.

Disadvantages

- Complexity: Properly dividing the problem and reassembling the results is a complex task.

- Security: Security measures must be taken to keep track of the data packets sent over the network; otherwise they can be misused for illegal purposes.

- Network reliance: In case of a network failure, the entire system may become unstable.

Parallel computing

In serial computation, the problem is divided into a series of instructions and these instructions are executed sequentially, i.e. one after another. Here, only one instruction is executed at a time. But in parallel computing, more than one instruction is executed simultaneously.

| Serial computing | Parallel computing |
|---|---|
| A single processor is used | Multiple processors with shared memory are used |
| A problem is divided into a series of instructions | A problem is divided into smaller parts that can be solved simultaneously |
| Instructions are executed sequentially | Instructions are executed simultaneously |
| Only one instruction is executed, on a single processor, at any moment | More than one instruction is executed on multiple processors at any moment |
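The difference between the two columns of the table can be seen in a small timing experiment. This is an illustrative sketch, assuming each sub-task takes a fixed time that is simulated with `time.sleep`; the helper names `task`, `run_serial`, `run_parallel` and `timed` are hypothetical.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def task(n: int) -> int:
    """One small sub-problem; the sleep simulates work (an assumption for the demo)."""
    time.sleep(0.05)
    return n * n


def run_serial(items):
    # Serial: one task at a time, each starts only after the previous one ends.
    return [task(n) for n in items]


def run_parallel(items, workers: int = 4):
    # Parallel: several workers run tasks at the same time;
    # pool.map still returns the results in the original order.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(task, items))


def timed(fn, items):
    start = time.perf_counter()
    result = fn(items)
    return result, time.perf_counter() - start


if __name__ == "__main__":
    items = [1, 2, 3, 4]
    serial_result, serial_time = timed(run_serial, items)
    parallel_result, parallel_time = timed(run_parallel, items)
    print(serial_result, f"serial: {serial_time:.2f} s")
    print(parallel_result, f"parallel: {parallel_time:.2f} s")
```

Both runs give the same answer, but the parallel run takes roughly the time of one task instead of four. In CPython the threads overlap here only because `sleep` releases the interpreter lock; for CPU-heavy work, `ProcessPoolExecutor` would be the usual choice to use several processors.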

AADITYA, the fastest supercomputer used by the Indian Institute of Tropical Meteorology, is an example of parallel computing.

Grid computing

It is a system in which millions of computers, smartphones, satellites, telescopes, cameras, sensors, etc. are connected to each other as a cyber world in which computational power (resources, services, data) is readily available, like electric power. Any information can be made available at our fingertips, at any time and at any place. This is used in disaster management, weather forecasting, market forecasting, bioinformatics, etc.

Cluster computing

Cluster means group. Here a group of computers, storage devices, etc. are connected through a network and linked together so that they work like a single computer. It provides computational power through parallel processing. Its cost is low and it is used for scientific applications.

Advantages

- Price-performance ratio: The performance is high and the cost is low.

- Availability: If one system in the cluster fails, the other systems do its work.

- Scalability: Computers can easily be added as the workload increases.
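The availability and scalability points above can be illustrated with a tiny failover sketch. This is a simulation, not a real cluster manager: the `Node` class, its `alive` flag and the `submit` function are hypothetical, and a failed machine is modelled simply by raising `ConnectionError`.

```python
class Node:
    """A hypothetical cluster node; `alive` simulates whether the machine is up."""

    def __init__(self, name: str, alive: bool = True):
        self.name = name
        self.alive = alive

    def run(self, job: str) -> str:
        if not self.alive:
            raise ConnectionError(f"{self.name} is down")
        return f"{job} done by {self.name}"


def submit(job: str, nodes) -> str:
    """Try each node in turn; if one fails, the next one takes over the job."""
    for node in nodes:
        try:
            return node.run(job)
        except ConnectionError:
            continue  # failover: skip the failed node and try the next
    raise RuntimeError("all nodes in the cluster are down")


cluster = [Node("node1", alive=False), Node("node2"), Node("node3")]
print(submit("job-42", cluster))  # prints "job-42 done by node2"

# Scalability: adding capacity is just adding another node to the list.
cluster.append(Node("node4"))
```

Real clusters detect failures with heartbeats and schedulers rather than by trying nodes one by one, but the idea that another machine picks up the work is the same.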

Disadvantages

- Programmability issues: The computers in a cluster may use different software and hardware, which makes programming them difficult.

- Problem in finding faults: Fault detection is very difficult.

Cloud computing

It is an emerging computing technology that uses the Internet and central remote servers to maintain data and applications. Examples are email services, office software (word processors, spreadsheets, presentations, databases, etc.), graphics software, etc. The information is placed on a central remote server, just like clouds in the sky, hence the name cloud computing.

Cloud service models (3 major services)

- Software as a Service (SaaS)

- Platform as a Service (PaaS)

- Infrastructure as a Service (IaaS)

Artificial Intelligence

The first definition of Artificial Intelligence was established by Alan Turing. Turing strongly believed that a well-designed computer could do everything that a brain could do, and his statements are still considered visionary. “AI means the capability of a computer to behave just like a human being when both face a common situation.”

The term AI was first coined by John McCarthy in 1956. With the help of AI, computers can solve tasks such as playing chess, proving mathematical theorems, natural language processing, medical diagnosis, etc.

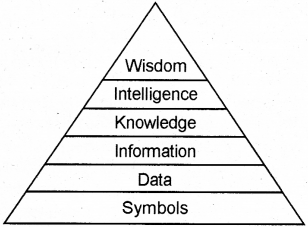

Data is a collection of mere symbols. Processing data gives us information, and knowledge is organized information; it can be a piece of information that helps in decision making. The ability to draw useful inferences from the available knowledge is generally referred to as intelligence. Wisdom is the maturity of mind that directs its intelligence to achieve desirable goals.

Turing Test approach to AI: The Turing test is a test of a machine’s ability to exhibit intelligent behaviour equivalent to, or indistinguishable from, that of a human. In the test, a human judge engages in natural language conversations with a human and with a machine designed to generate performance indistinguishable from that of a human being. All participants are separated from one another. If the judge cannot reliably tell the machine from the human, the machine is said to have passed the test. The test does not check the ability to give correct answers to questions; it checks how closely the answers resemble typical human answers. Turing predicted that by 2000 a computer would pass the test. To pass it, the computer would need to possess the following capabilities:

- Natural Language Processing (NLP): It enables the computer to communicate successfully in English. Speech recognition, speech synthesis, machine translation, handwritten character recognition, etc. are some of its practical applications.

- Knowledge representation: To incorporate human knowledge.

- Automated reasoning: To use the stored knowledge to answer questions and to infer new knowledge.

- Machine learning: To adapt to new circumstances and to detect and extrapolate patterns.

- Computer vision: The capability to perceive objects.

- Robotic activities: To make a robot smarter, intelligence must be incorporated into it.

Computational Intelligence

Earlier, machines were used to do our physical work. But with the invention of digital computers, we use computers to solve time-consuming and complex tasks faced in real life. The main goal is to increase the involvement of machines and reduce that of humans. The study of man-machine interaction and control methods is known as Cybernetics.

Nowadays, Artificial Intelligence is the study of how to make computers do things which, at present, people do better. AI is a combination of computer science, biology, medicine, robotics, etc.

Computational Intelligence paradigms

Computational Intelligence is the ability of a computer to face and solve real-life problems just as an intelligent person would. It includes Artificial Neural Networks (ANN), Evolutionary Computation (EC), Swarm Intelligence (SI) and Fuzzy Systems (FS).

A) Artificial Neural Networks (ANN): The brain is a complex, nonlinear and parallel computer with the ability to perform tasks such as pattern recognition, perception and motor control. ANN is a method of simulating biological neural systems so that a computer can learn, memorise and generalise like human beings. The human brain cortex consists of 10-500 billion neurons with 60 trillion synapses (a synapse is a structure that permits a neuron to pass an electrical or chemical signal to another neuron).
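A single artificial neuron (a perceptron) is the simplest way to see how an ANN learns by adjusting the strength of its connections, loosely like synapses. The sketch below assumes the classic perceptron learning rule and a toy task (the logical AND function); the names `train_perceptron` and `predict` are hypothetical, and a real ANN would use many neurons arranged in layers.

```python
def step(net: float) -> int:
    """Threshold activation: the neuron 'fires' (outputs 1) when its input reaches 0."""
    return 1 if net >= 0 else 0


def predict(weights, bias, inputs) -> int:
    net = sum(w * x for w, x in zip(weights, inputs)) + bias
    return step(net)


def train_perceptron(samples, n_inputs: int, epochs: int = 20, lr: float = 1.0):
    weights = [0.0] * n_inputs  # connection strengths, all starting at zero
    bias = 0.0
    for _ in range(epochs):
        errors = 0
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            if error:
                errors += 1
                # Perceptron rule: strengthen or weaken each active connection
                weights = [w + lr * error * x for w, x in zip(weights, inputs)]
                bias += lr * error
        if errors == 0:  # every example is classified correctly: stop learning
            break
    return weights, bias


AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
w, b = train_perceptron(AND_DATA, n_inputs=2)
for x, _ in AND_DATA:
    print(x, predict(w, b, x))
```

After training, the neuron reproduces the AND table from examples alone; nobody wrote an explicit rule for it, which is the sense in which the network "learns".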

B) Evolutionary Computation (EC): It is the simulation of natural evolution, i.e. survival of the fittest. In nature, we can see that the stronger individuals survive and the others are eliminated. EC is applied in data mining, fault diagnosis, classification, scheduling, etc.
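Survival of the fittest can be sketched as a tiny genetic algorithm, one common form of EC. This is an illustrative example, assuming a toy "OneMax" problem (evolve a string of bits with as many 1s as possible); the population size, mutation rate and the `evolve` function are hypothetical choices, not a standard library API.

```python
import random


def fitness(bits) -> int:
    """OneMax: the more 1s an individual has, the fitter it is (toy objective)."""
    return sum(bits)


def evolve(length=20, pop_size=30, generations=40, mutation_rate=0.02, seed=1):
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    population = [[rng.randint(0, 1) for _ in range(length)] for _ in range(pop_size)]
    best_history = []
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        best_history.append(fitness(population[0]))
        survivors = population[: pop_size // 2]  # selection: the fittest half survives
        children = []
        while len(children) < pop_size - len(survivors):
            mum, dad = rng.sample(survivors, 2)
            cut = rng.randrange(1, length)
            child = mum[:cut] + dad[cut:]  # crossover: mix two parents
            # mutation: occasionally flip a bit
            child = [1 - g if rng.random() < mutation_rate else g for g in child]
            children.append(child)
        population = survivors + children  # next generation
    population.sort(key=fitness, reverse=True)
    best_history.append(fitness(population[0]))
    return population[0], best_history


best, history = evolve()
print(history[0], "->", history[-1])
```

Because the survivors are carried into the next generation unchanged, the best fitness never goes down from one generation to the next; weaker individuals are replaced by the offspring of stronger ones.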

C) Swarm Intelligence (SI): Swarm Intelligence is the study of the behaviour of colonies or groups of social animals, birds, insects, ants, etc.: how they communicate with each other and how they create and manage their colonies so effectively.
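Particle Swarm Optimisation (PSO) is one well-known SI technique, inspired by flocking birds: each particle remembers its own best position and is also pulled towards the best position found by the whole swarm. A minimal one-dimensional sketch, assuming the goal is to find the x that minimises (x - 3)^2; the coefficients `w`, `c1` and `c2` are common textbook values, not tuned ones.

```python
import random


def pso(f, lo, hi, n_particles=20, iterations=100, w=0.7, c1=1.5, c2=1.5, seed=0):
    rng = random.Random(seed)
    pos = [rng.uniform(lo, hi) for _ in range(n_particles)]
    vel = [0.0] * n_particles
    pbest = pos[:]          # each particle's own best position so far
    gbest = min(pos, key=f)  # best position found by the whole swarm
    for _ in range(iterations):
        for i in range(n_particles):
            r1, r2 = rng.random(), rng.random()
            # New velocity = inertia + pull to own best + pull to swarm's best
            vel[i] = (w * vel[i]
                      + c1 * r1 * (pbest[i] - pos[i])
                      + c2 * r2 * (gbest - pos[i]))
            pos[i] += vel[i]
            if f(pos[i]) < f(pbest[i]):
                pbest[i] = pos[i]
                if f(pos[i]) < f(gbest):
                    gbest = pos[i]  # share the discovery with the swarm
    return gbest


best_x = pso(lambda x: (x - 3) ** 2, -10, 10)
print(round(best_x, 4))
```

No single particle knows where the minimum is; the good answer emerges from simple individuals sharing information, which mirrors how a flock or colony behaves.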

D) Fuzzy Systems (FS): Human beings use common sense when facing a problem. Just like human beings, fuzzy systems can also use common sense and behave like a human being. Fuzzy systems are used to control gear transmission and braking systems, lifts, home appliances, traffic signals, etc.
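The traffic-signal use can be sketched as a tiny fuzzy controller. Instead of a hard rule such as "more than 10 cars means long", a queue can be partly "short" and partly "medium" at the same time. All membership ranges and green times below are hypothetical values chosen for illustration, and the output uses a simple weighted average as the defuzzification step.

```python
def triangle(x, a, b, c):
    """Membership rising from 0 at a to 1 at b, then falling back to 0 at c."""
    if x <= a or x >= c:
        return 0.0
    if x <= b:
        return (x - a) / (b - a)
    return (c - x) / (c - b)


def rising(x, a, b):
    """Shoulder membership: 0 below a, 1 above b, linear in between."""
    return min(1.0, max(0.0, (x - a) / (b - a)))


def green_time(queue_length: float) -> float:
    # Fuzzify: to what degree (0..1) is the queue short, medium or long?
    short = triangle(queue_length, -1, 0, 10)  # peak at an empty queue
    medium = triangle(queue_length, 5, 15, 25)
    long_ = rising(queue_length, 20, 30)
    # Rules (hypothetical values): short -> 10 s, medium -> 30 s, long -> 60 s
    rules = [(short, 10), (medium, 30), (long_, 60)]
    total = sum(degree for degree, _ in rules)
    if total == 0:
        return 0.0
    # Defuzzify: weighted average of the rule outputs
    return sum(degree * seconds for degree, seconds in rules) / total


for cars in (0, 7, 15, 40):
    print(cars, "cars ->", round(green_time(cars), 1), "s of green light")
```

A queue of 7 cars is treated as a little short and a little medium, so it gets a green time between the two rules instead of jumping abruptly from one value to another.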

Application of Computational Intelligence

A) Biometrics: Biometrics refers to the use of the unique characteristics of a human being, such as fingerprints, face, iris, retina, etc., to recognise an individual. Biometrics are used to record attendance. E.g. in banks, employee login is restricted by using a fingerprint reader.
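Iris recognition systems commonly encode an iris as a string of bits and compare two codes by the fraction of bits that differ (the Hamming distance). The sketch below is a toy version for an attendance system, assuming 16-bit codes (real iris codes are around 2048 bits and also use masks) and an assumed match threshold of 0.32; the employee names and templates are hypothetical.

```python
MATCH_THRESHOLD = 0.32  # assumed decision threshold for this toy example


def hamming_fraction(code_a: str, code_b: str) -> float:
    """Fraction of positions where two equal-length bit strings differ."""
    if len(code_a) != len(code_b):
        raise ValueError("codes must have the same length")
    mismatches = sum(a != b for a, b in zip(code_a, code_b))
    return mismatches / len(code_a)


def identify(scanned: str, enrolled: dict):
    """Return the enrolled name whose template is closest, or None if nobody is close enough."""
    best_name, best_score = None, 1.0
    for name, template in enrolled.items():
        score = hamming_fraction(scanned, template)
        if score < best_score:
            best_name, best_score = name, score
    return best_name if best_score <= MATCH_THRESHOLD else None


# Hypothetical 16-bit templates stored when the employees enrolled.
EMPLOYEES = {"Anu": "1011001110100101", "Ravi": "0100110001011010"}

print(identify("1011001110100111", EMPLOYEES))  # one bit differs from Anu's template
print(identify("1111111100000000", EMPLOYEES))  # too different from everyone
```

A fresh scan is never exactly the same as the stored template, which is why the match uses a tolerance rather than an exact comparison.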

B) Robotics: It is a branch of scientific study associated with the design, manufacturing and control of the movements of robots. Robots are used in almost all areas.

C) Computer vision: The world has moved from two-dimensional images to three-dimensional ones. 3D TVs are available in the market. Multiple cameras are used to capture images, which are merged to form 3D pictures. 3D scanners are used in the medical field to diagnose diseases.

D) Natural Language Processing: It deals with how computers can communicate just as human beings communicate naturally. Natural languages are the languages spoken by people. Achieving this ability to communicate like a human being is a laborious task. NLP can be further classified into two: Natural Language Understanding (NLU) and Natural Language Generation (NLG).

- NLU: The ability to understand languages like English, Malayalam, etc.

- NLG: It deals with the creation of output, i.e. generating words and giving replies.
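The split between NLU and NLG can be shown with a very small rule-based chatbot. This is a toy sketch, assuming keyword matching for understanding and fixed templates for generation; the intents, keywords and replies are hypothetical, and real NLP systems use far richer language models.

```python
import re

# Hypothetical keywords for each intent (meaning) the bot can understand.
INTENTS = {
    "greet": {"hello", "hi", "namaskaram"},
    "weather": {"weather", "rain", "temperature"},
    "bye": {"bye", "goodbye"},
}

# Hypothetical reply templates used for generation.
REPLIES = {
    "greet": "Hello! How can I help you?",
    "weather": "Let me check the weather forecast for {place}.",
    "bye": "Goodbye!",
    None: "Sorry, I did not understand that.",
}


def understand(sentence: str) -> dict:
    """NLU step: turn free text into a structured meaning (intent and place)."""
    words = re.findall(r"[a-z]+", sentence.lower())
    intent = next((name for name, keys in INTENTS.items() if keys & set(words)), None)
    place = None
    if "in" in words and words.index("in") + 1 < len(words):
        place = words[words.index("in") + 1].capitalize()
    return {"intent": intent, "place": place}


def generate(meaning: dict) -> str:
    """NLG step: turn the structured meaning back into a sentence."""
    template = REPLIES[meaning["intent"]]
    return template.format(place=meaning["place"] or "your town")


print(generate(understand("Will it rain in Kochi today?")))
```

Keeping the two steps separate means the same understood meaning could be answered in another language just by changing the reply templates.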

E) Automatic Speech Recognition (ASR): To log in to some computers, laptops, tablets, smartphones, etc., and to open some gates, doors, etc., you have to say the password orally. The computer recognises the speech and unlocks the device. Such devices work based on NLP technology, and this system is called an Automatic Speech Recognition (ASR) system. To implement this system, a microphone or a telephone is required, and the spoken instructions are converted into written text. Examples are Apple iOS, Google, etc.

F) Optical Character Recognition (OCR) and Handwritten Character Recognition (HCR) Systems: OCR is used to read text from paper as an image and translate this image into a form that a computer can manipulate. For example, entering the text contents of a book by typing on a keyboard takes a lot of time; with OCR and HCR this can be done easily. OCR is expensive. An HCR system reads handwritten text and converts it into computer-readable form.
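One of the simplest OCR techniques is template matching: compare the scanned image of a character with stored images of known characters and pick the closest one. The sketch below assumes tiny 5x3 black-and-white bitmaps (`#` for ink, `.` for paper) and only three hypothetical templates; practical OCR and HCR systems use much more robust feature extraction and learning.

```python
# Hypothetical 5x3 templates for a few characters.
TEMPLATES = {
    "0": ["###",
          "#.#",
          "#.#",
          "#.#",
          "###"],
    "1": [".#.",
          "##.",
          ".#.",
          ".#.",
          "###"],
    "7": ["###",
          "..#",
          ".#.",
          ".#.",
          ".#."],
}


def mismatch(a, b) -> int:
    """Count the pixels that differ between two bitmaps of the same size."""
    return sum(p != q for row_a, row_b in zip(a, b) for p, q in zip(row_a, row_b))


def recognise(bitmap) -> str:
    """Return the character whose template differs from the bitmap in the fewest pixels."""
    return min(TEMPLATES, key=lambda ch: mismatch(TEMPLATES[ch], bitmap))


# A scanned '1' with one noisy pixel (an ink spot in row 4).
noisy_one = [".#.",
             "##.",
             ".#.",
             ".##",
             "###"]
print(recognise(noisy_one))
```

Because the decision is based on the nearest template rather than an exact match, a small amount of noise in the scan does not prevent recognition.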

G) Bioinformatics: It is the application of computer technology to the management of biological information. With the help of a computer, fingerprints, DNA, iris, retina, etc. can be analysed to identify the person concerned.

Three goals of bioinformatics.

- Organise: Huge amounts of data are organised so that information can be accessed easily and new information can be added when it is produced.

- Develop tools : Develop new tools to analyse data efficiently.

- Analysis: With the help of these tools analyse the data and produce results.
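The three goals above can be mapped onto a small program. This is an illustrative sketch, assuming the biological data are DNA sequences: a `SequenceStore` class (hypothetical) organises them, two standard measures (GC content and reverse complement) act as the tools, and `analyse` applies the tools to every stored sequence.

```python
COMPLEMENT = str.maketrans("ACGT", "TGCA")


class SequenceStore:
    """Goal 1 (organise): keep DNA sequences indexed by an ID for easy access."""

    def __init__(self):
        self._data = {}

    def add(self, seq_id: str, dna: str) -> None:
        dna = dna.upper()
        if not dna or set(dna) - set("ACGT"):
            raise ValueError(f"{seq_id}: not a valid DNA sequence")
        self._data[seq_id] = dna  # new data can be added at any time

    def items(self):
        return self._data.items()


# Goal 2 (develop tools): small reusable functions for analysing sequences.
def gc_content(dna: str) -> float:
    """Fraction of bases that are G or C."""
    return (dna.count("G") + dna.count("C")) / len(dna)


def reverse_complement(dna: str) -> str:
    """The sequence of the opposite DNA strand, read in the usual direction."""
    return dna.translate(COMPLEMENT)[::-1]


# Goal 3 (analyse): apply the tools to the organised data and produce results.
def analyse(store: SequenceStore) -> dict:
    return {
        seq_id: {"gc": round(gc_content(dna), 2), "revcomp": reverse_complement(dna)}
        for seq_id, dna in store.items()
    }


store = SequenceStore()
store.add("sample1", "atgc")
store.add("sample2", "GGCCAT")
print(analyse(store))
```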

H) Geographical Information System :

A geosynchronous satellite revolves at the same rate (revolutions per minute, RPM) as the earth rotates, and in the same direction. Thus both the earth and the satellite complete one revolution in exactly the same time, and hence the relative position of the ground station with respect to the satellite never changes. Geographic Information System (GIS) technology developed from digital cartography and Computer-Aided Design (CAD) database management systems. GIS, as the name implies, deals with capturing, storing for future reference, checking and displaying data related to various positions on the earth's surface. GIS can be applied in many areas such as soil mapping, agricultural mapping, forest mapping, e-Governance, etc.
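Because a geosynchronous satellite must take exactly one sidereal day (about 86,164 s) per orbit, Kepler's third law fixes how far from the earth it must be: r = (GM T^2 / 4 pi^2)^(1/3). The short calculation below uses standard values for Earth's gravitational parameter and equatorial radius and assumes a circular orbit; it gives an orbit radius of about 42,164 km, i.e. roughly 35,786 km above the equator.

```python
import math

GM_EARTH = 3.986004418e14  # m^3/s^2, Earth's standard gravitational parameter
SIDEREAL_DAY = 86164.0905  # s, time for one rotation of the earth relative to the stars
EARTH_RADIUS = 6378.137e3  # m, equatorial radius


def orbit_radius(period_s: float) -> float:
    """Kepler's third law for a circular orbit: r = (GM * T^2 / (4 * pi^2)) ** (1/3)."""
    return (GM_EARTH * period_s ** 2 / (4 * math.pi ** 2)) ** (1 / 3)


r = orbit_radius(SIDEREAL_DAY)
print(f"orbit radius: {r / 1000:,.0f} km from the earth's centre")
print(f"altitude:     {(r - EARTH_RADIUS) / 1000:,.0f} km above the equator")
```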

GIS is used in development planning like strategic rural and urban planning, infrastructure planning, precision agriculture planning, etc.
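A basic GIS operation in planning is measuring distances between positions given as latitude and longitude. The sketch below uses the haversine formula with a mean Earth radius of 6371 km (a spherical-earth approximation) to find the nearest facility to a location; the facility names and coordinates are approximate values used only for illustration.

```python
import math

EARTH_MEAN_RADIUS_KM = 6371.0  # spherical-earth approximation


def haversine_km(lat1, lon1, lat2, lon2) -> float:
    """Great-circle distance in km between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_MEAN_RADIUS_KM * math.asin(math.sqrt(a))


# Approximate coordinates (latitude, longitude) of some hypothetical facilities.
FACILITIES = {
    "Thiruvananthapuram": (8.5241, 76.9366),
    "Kochi": (9.9312, 76.2673),
    "Kozhikode": (11.2588, 75.7804),
}


def nearest(lat, lon, facilities=FACILITIES) -> str:
    """Name of the facility closest to the given point."""
    return min(facilities, key=lambda name: haversine_km(lat, lon, *facilities[name]))


print(nearest(10.0, 76.3))
```

A real GIS would read such points from map layers and could use a more accurate ellipsoidal earth model, but distance and nearest-facility queries like this are the building blocks of infrastructure planning.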