What are Addition and Multiplication Theorems on Probability?

Addition and Multiplication Theorems of Probability

State and prove the addition and multiplication theorems of probability, with examples

Equations of the Addition and Multiplication Theorems

Notations:

- P(A + B) or P(A∪B) = Probability that A or B happens

= Probability that event A, event B, or both happen

= Probability that at least one of the events A and B occurs

- P(AB) or P(A∩B) = Probability that the events A and B happen together.

(1) When events are not mutually exclusive:

If A and B are any two events (in particular, events that are not mutually exclusive), then

P(A∪B) = P(A) + P(B) – P(A∩B)

or P(A + B) = P(A) + P(B) – P(AB)

For any three events A, B, C

P(A∪B∪C) = P(A) + P(B) + P(C) – P(A∩B) – P(B∩C) – P(C∩A) + P(A∩B∩C)

or P(A + B + C) = P(A) + P(B) + P(C) – P(AB) – P(BC) – P(CA) + P(ABC)
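Both forms of the addition theorem can be checked by brute-force counting. The sketch below assumes a single roll of a fair die with equally likely outcomes. The events A (even number), B (multiple of 3) and C (greater than 4) and the helper `P` are illustrative choices, not part of the theorem.

```python
from fractions import Fraction

# Hypothetical setup: one roll of a fair die, all outcomes equally likely.
S = set(range(1, 7))


def P(event):
    """Classical probability: favourable outcomes / total outcomes."""
    return Fraction(len(event & S), len(S))


A = {2, 4, 6}  # even number
B = {3, 6}     # multiple of 3
C = {5, 6}     # greater than 4

# Two events: P(A∪B) = P(A) + P(B) - P(A∩B)
lhs2 = P(A | B)
rhs2 = P(A) + P(B) - P(A & B)

# Three events: subtract the pairwise overlaps, add back the triple overlap
lhs3 = P(A | B | C)
rhs3 = (P(A) + P(B) + P(C)
        - P(A & B) - P(B & C) - P(C & A)
        + P(A & B & C))

print(lhs2, rhs2)  # 2/3 2/3
print(lhs3, rhs3)  # 5/6 5/6
```

Exact `Fraction` arithmetic is used so that both sides compare equal without floating-point rounding.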

(2) When events are mutually exclusive:

If A and B are mutually exclusive events, then

A∩B = ∅, i.e. n(A∩B) = 0 ⇒ P(A∩B) = 0

∴ P(A∪B) = P(A) + P(B).

For any three pairwise mutually exclusive events A, B, C,

P(A∩B) = P(B∩C) = P(C∩A) = P(A∩B∩C) = 0

∴ P(A∪B∪C) = P(A) + P(B) + P(C).

The probability that any one of several mutually exclusive events happens is equal to the sum of their probabilities, i.e. if A1, A2, …, An are mutually exclusive events, then

P(A1 + A2 + … + An) = P(A1) + P(A2) + … + P(An)

i.e. P(Σ Ai) = Σ P(Ai).
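For mutually exclusive events the intersection term vanishes, so the addition theorem reduces to a plain sum. The check below assumes two fair dice and the hypothetical events "sum is 7" and "sum is 11", which can never occur together.

```python
from fractions import Fraction
from itertools import product

# Hypothetical setup: 36 equally likely ordered outcomes of two fair dice.
S = list(product(range(1, 7), repeat=2))


def P(pred):
    """Classical probability of the event {s in S : pred(s)}."""
    return Fraction(sum(1 for s in S if pred(s)), len(S))


def sum_is_7(s):
    return s[0] + s[1] == 7


def sum_is_11(s):
    return s[0] + s[1] == 11


# The events are mutually exclusive, so P(A∩B) = 0 ...
p_both = P(lambda s: sum_is_7(s) and sum_is_11(s))

# ... and the addition theorem becomes P(A∪B) = P(A) + P(B).
p_union = P(lambda s: sum_is_7(s) or sum_is_11(s))
print(p_both, p_union, P(sum_is_7) + P(sum_is_11))  # 0 2/9 2/9
```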

(3) When events are independent:

If A and B are independent events, then P(A∩B) = P(A).P(B)

∴ P(A∪B) = P(A) + P(B) – P(A).P(B)

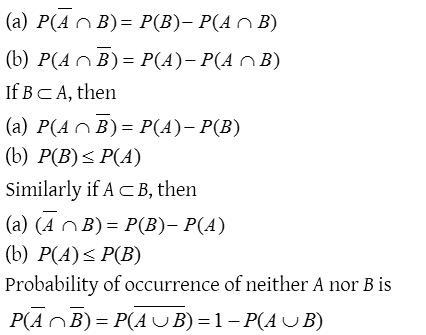
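A quick check of the independent-events form follows. It assumes two fair dice, with A = "first die shows 6" and B = "second die shows 6"; these events and the helper names are illustrative. Because the dice do not influence each other, P(A∩B) = P(A).P(B), and the addition theorem becomes P(A) + P(B) − P(A).P(B).

```python
from fractions import Fraction
from itertools import product

# Hypothetical setup: 36 equally likely ordered outcomes of two fair dice.
S = list(product(range(1, 7), repeat=2))


def P(pred):
    """Classical probability of the event {s in S : pred(s)}."""
    return Fraction(sum(1 for s in S if pred(s)), len(S))


def first_is_six(s):
    return s[0] == 6


def second_is_six(s):
    return s[1] == 6


p_a = P(first_is_six)
p_b = P(second_is_six)

# Independence: P(A∩B) = P(A).P(B)
p_both = P(lambda s: first_is_six(s) and second_is_six(s))

# Addition theorem specialised to independent events
p_either = P(lambda s: first_is_six(s) or second_is_six(s))
print(p_both, p_a * p_b)                # 1/36 1/36
print(p_either, p_a + p_b - p_a * p_b)  # 11/36 11/36
```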

(4) Some other theorems

- Let A and B be two events associated with a random experiment, then

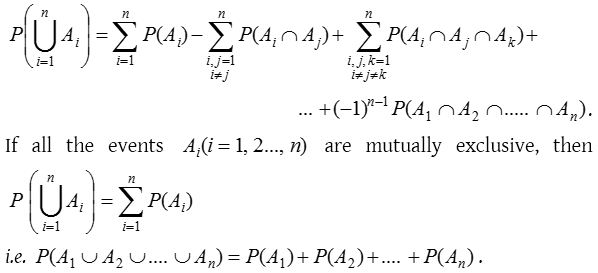

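- Generalization of the addition theorem:

If A1, A2, …, An are n events associated with a random experiment, then

P(A1∪A2∪…∪An) = Σ P(Ai) − Σ P(Ai∩Aj) + Σ P(Ai∩Aj∩Ak) − … + (−1)^(n−1) P(A1∩A2∩…∩An),

where the first sum runs over i = 1, …, n, the second over all pairs i < j, the third over all triples i < j < k, and so on.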

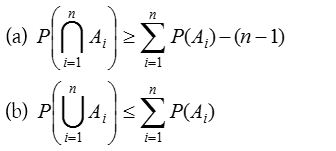

- Boole’s inequality: If A1, A2, …, An are n events associated with a random experiment, then

(i) P(A1∪A2∪…∪An) ≤ P(A1) + P(A2) + … + P(An)

(ii) P(A1∩A2∩…∩An) ≥ P(A1) + P(A2) + … + P(An) − (n − 1)

These results can be established using the principle of mathematical induction.

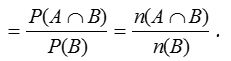

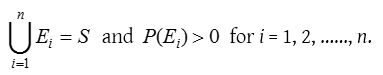
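The generalized addition theorem (inclusion–exclusion) and Boole’s inequality can also be checked numerically. This sketch makes three assumptions: a hypothetical sample space of the integers 1 to 12, all equally likely; three overlapping events (multiples of 2, 3 and 4); and a function named `union_by_inclusion_exclusion`, which is illustrative. The function evaluates the alternating sum over every group of 1, 2, …, n events.

```python
from fractions import Fraction
from itertools import combinations

# Hypothetical sample space: the integers 1..12, all equally likely.
S = set(range(1, 13))


def P(event):
    """Classical probability of an event given as a subset of S."""
    return Fraction(len(event & S), len(S))


def union_by_inclusion_exclusion(events):
    """P(A1∪...∪An) from the generalized addition theorem."""
    total = Fraction(0)
    for k in range(1, len(events) + 1):
        sign = 1 if k % 2 == 1 else -1  # (-1)^(k-1)
        # Add or subtract P(Ai∩Aj∩...) for every group of k distinct events.
        for group in combinations(events, k):
            total += sign * P(set.intersection(*group))
    return total


events = [
    {x for x in S if x % 2 == 0},  # multiple of 2
    {x for x in S if x % 3 == 0},  # multiple of 3
    {x for x in S if x % 4 == 0},  # multiple of 4
]

p_union = P(set.union(*events))
p_inter = P(set.intersection(*events))
sum_p = sum(P(e) for e in events)

print(union_by_inclusion_exclusion(events), p_union)  # 2/3 2/3
# Boole's inequality (upper bound on the union) and the lower bound on the intersection
print(p_union <= sum_p, p_inter >= sum_p - (len(events) - 1))  # True True
```

The alternating sum touches 2^n − 1 groups of events, so this direct form is only practical for a small number of events.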

Conditional probability

Let A and B be two events associated with a random experiment, with P(B) ≠ 0. The probability that A occurs under the condition that B has already occurred is called the conditional probability of A given B. It is denoted by P(A/B) and is given by P(A/B) = P(A∩B)/P(B).

Thus, P(A/B) = Probability of occurrence of A, given that B has already happened.

Similarly, P(B/A) = Probability of occurrence of B, given that A has already happened.

Sometimes, P(A/B) is also used to denote the probability of occurrence of A when B occurs. Similarly, P(B/A) is used to denote the probability of occurrence of B when A occurs.
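A minimal sketch of conditional probability follows. It uses the standard definition P(A/B) = P(A∩B)/P(B) and assumes two fair dice, with A = "sum is 8" and B = "first die is even"; the events and the name `conditional` are illustrative. The result is cross-checked by counting directly inside the reduced sample space B.

```python
from fractions import Fraction
from itertools import product

# Hypothetical setup: 36 equally likely ordered outcomes of two fair dice.
S = list(product(range(1, 7), repeat=2))


def P(pred):
    """Classical probability of the event {s in S : pred(s)}."""
    return Fraction(sum(1 for s in S if pred(s)), len(S))


def conditional(pred_a, pred_b):
    """P(A/B) = P(A∩B) / P(B), defined only when P(B) != 0."""
    p_b = P(pred_b)
    if p_b == 0:
        raise ValueError("P(B) must be non-zero")
    return P(lambda s: pred_a(s) and pred_b(s)) / p_b


def sum_is_8(s):
    return s[0] + s[1] == 8


def first_even(s):
    return s[0] % 2 == 0


p = conditional(sum_is_8, first_even)

# Cross-check: treat B as the new sample space and count A inside it.
reduced = [s for s in S if first_even(s)]
direct = Fraction(sum(1 for s in reduced if sum_is_8(s)), len(reduced))
print(p, direct)  # 1/6 1/6
```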

Multiplication Theorem of Probability

- If A and B are two events associated with a random experiment, then P(A∩B) = P(A).P(B/A) if P(A) ≠ 0, or, equivalently, P(A∩B) = P(B).P(A/B) if P(B) ≠ 0.

- Extension of the multiplication theorem:

If A1, A2, …, An are n events related to a random experiment, then

P(A1∩A2∩A3∩…∩An) = P(A1) P(A2/A1) P(A3/A1∩A2) … P(An/A1∩A2∩…∩An−1),

where P(Ai/A1∩A2∩…∩Ai−1) represents the conditional probability of the event Ai, given that the events A1, A2, …, Ai−1 have already happened.

- Multiplication theorem for independent events:

If A and B are independent events associated with a random experiment, then P(A∩B) = P(A).P(B), i.e., the probability of the simultaneous occurrence of two independent events is equal to the product of their probabilities.

By the multiplication theorem, we have P(A∩B) = P(A).P(B/A) (assuming P(A) ≠ 0). Since A and B are independent events, P(B/A) = P(B). Hence, P(A∩B) = P(A).P(B).

- Extension of the multiplication theorem for independent events:

If A1, A2, …, An are mutually independent events associated with a random experiment, then

P(A1∩A2∩A3∩…∩An) = P(A1) P(A2) … P(An).

By the multiplication theorem, we have

P(A1∩A2∩A3∩…∩An) = P(A1) P(A2/A1) P(A3/A1∩A2) … P(An/A1∩A2∩…∩An−1).

Since A1, A2, …, An are mutually independent events, each conditional probability equals the corresponding unconditional one:

P(A2/A1) = P(A2), P(A3/A1∩A2) = P(A3), …, P(An/A1∩A2∩…∩An−1) = P(An).

Hence, P(A1∩A2∩A3∩…∩An) = P(A1) P(A2) … P(An).

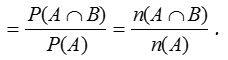
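The multiplication theorem and its special case for independent events can be checked by counting ordered draws from a standard 52-card deck. The (rank, suit) encoding with rank 0 as the ace, and the helper names, are assumptions for illustration. Drawing without replacement makes successive draws dependent, so the chain P(A1) P(A2/A1) P(A3/A1∩A2) applies. Drawing with replacement makes the draws independent, so the plain product P(A1) P(A2) applies.

```python
from fractions import Fraction
from itertools import permutations, product

# Hypothetical encoding: a card is (rank, suit); rank 0 stands for the ace.
DECK = list(product(range(13), range(4)))


def is_ace(card):
    return card[0] == 0


def prob(outcomes, pred):
    """Fraction of equally likely outcomes satisfying pred (single pass)."""
    favourable = total = 0
    for o in outcomes:
        total += 1
        favourable += pred(o)
    return Fraction(favourable, total)


def all_aces(hand):
    return all(map(is_ace, hand))


# Without replacement (dependent draws): ordered hands of distinct cards.
two_aces = prob(permutations(DECK, 2), all_aces)
three_aces = prob(permutations(DECK, 3), all_aces)

# Multiplication theorem: P(A1) P(A2/A1) and P(A1) P(A2/A1) P(A3/A1∩A2)
chain_two = Fraction(4, 52) * Fraction(3, 51)
chain_three = Fraction(4, 52) * Fraction(3, 51) * Fraction(2, 50)

# With replacement (independent draws): P(A1∩A2) = P(A1).P(A2)
indep_two = prob(product(DECK, repeat=2), all_aces)

print(two_aces, chain_two)              # 1/221 1/221
print(three_aces, chain_three)          # 1/5525 1/5525
print(indep_two, Fraction(4, 52) ** 2)  # 1/169 1/169
```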

Probability of at least one of the n independent events:

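If p1, p2, …, pn are the probabilities of n independent events A1, A2, …, An respectively, then the probability that at least one of them happens is

P(A1∪A2∪…∪An) = 1 − (1 − p1)(1 − p2)…(1 − pn),

since the complementary events are also independent, so the probability that none of the events happens is (1 − p1)(1 − p2)…(1 − pn).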

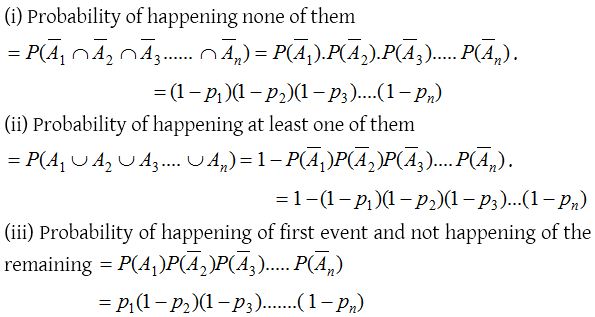
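The shortcut 1 − (1 − p1)(1 − p2)…(1 − pn) can be compared with an exhaustive sum over every "occurs / does not occur" pattern of the n independent events. The probabilities 1/2, 1/3 and 1/4 and the function names below are hypothetical.

```python
from fractions import Fraction
from itertools import product
from math import prod


def at_least_one(ps):
    """P(A1∪...∪An) = 1 - (1-p1)(1-p2)...(1-pn) for independent events."""
    return 1 - prod(1 - p for p in ps)


def at_least_one_brute(ps):
    """Sum the probabilities of every occurrence pattern except 'none occur'."""
    total = Fraction(0)
    for pattern in product([True, False], repeat=len(ps)):
        if any(pattern):
            # Independence: a pattern's probability is the product of its factors.
            total += prod(p if happened else 1 - p
                          for p, happened in zip(ps, pattern))
    return total


ps = [Fraction(1, 2), Fraction(1, 3), Fraction(1, 4)]
print(at_least_one(ps), at_least_one_brute(ps))  # 3/4 3/4
```

The brute-force version grows as 2^n and serves only as a check. The closed form needs just n multiplications.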

Total probability and Bayes’ rule

(1) The law of total probability:

Let S be the sample space and let E1, E2, …, En be n mutually exclusive and exhaustive events associated with a random experiment. If A is any event that occurs together with E1 or E2 or … or En, then

P(A) = P(E1) P(A/E1) + P(E2) P(A/E2) + … + P(En) P(A/En).

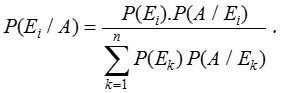
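Here is a minimal sketch of the law of total probability. It assumes a hypothetical factory in which three machines E1, E2, E3 produce 50%, 30% and 20% of the output, with defect rates of 1%, 2% and 3%. A is the event that a randomly chosen item is defective. The function name `total_probability` is illustrative.

```python
from fractions import Fraction as F


def total_probability(priors, likelihoods):
    """P(A) = sum of P(Ei) P(A/Ei) over mutually exclusive, exhaustive Ei."""
    if sum(priors) != 1:
        raise ValueError("the events Ei must be exhaustive: priors must sum to 1")
    return sum(p * lik for p, lik in zip(priors, likelihoods))


priors = [F(1, 2), F(3, 10), F(1, 5)]           # hypothetical P(E1), P(E2), P(E3)
likelihoods = [F(1, 100), F(1, 50), F(3, 100)]  # hypothetical P(A/E1), P(A/E2), P(A/E3)
print(total_probability(priors, likelihoods))   # 17/1000
```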

(2) Bayes’ rule:

Let S be a sample space and E1, E2, …, En be n mutually exclusive events such that E1∪E2∪…∪En = S and P(Ei) > 0 for i = 1, 2, …, n.

We can think of the Ei’s as the causes that lead to the outcome of an experiment. The probabilities P(Ei), i = 1, 2, …, n, are called prior probabilities. Suppose the experiment results in an outcome of event A, where P(A) > 0. We want the probability that the observed event A was due to cause Ei, that is, the conditional probability P(Ei/A). These probabilities are called posterior probabilities and are given by Bayes’ rule as

P(Ei/A) = P(Ei) P(A/Ei) / [P(E1) P(A/E1) + P(E2) P(A/E2) + … + P(En) P(A/En)], i = 1, 2, …, n.
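A minimal sketch of Bayes’ rule follows. It turns prior probabilities P(Ei) and likelihoods P(A/Ei) into posteriors P(Ei/A) = P(Ei) P(A/Ei) / Σj P(Ej) P(A/Ej). The data is hypothetical: three machines E1, E2, E3 make 50%, 30% and 20% of a factory’s items, with defect rates of 1%, 2% and 3%, and A is "the chosen item is defective". The function name `posteriors` is illustrative.

```python
from fractions import Fraction as F


def posteriors(priors, likelihoods):
    """Bayes' rule: P(Ei/A) = P(Ei) P(A/Ei) / sum over j of P(Ej) P(A/Ej)."""
    joint = [p * lik for p, lik in zip(priors, likelihoods)]  # P(Ei∩A)
    p_a = sum(joint)  # law of total probability gives P(A)
    if p_a == 0:
        raise ValueError("P(A) must be positive")
    return [j / p_a for j in joint]


priors = [F(1, 2), F(3, 10), F(1, 5)]           # hypothetical P(E1), P(E2), P(E3)
likelihoods = [F(1, 100), F(1, 50), F(3, 100)]  # hypothetical P(A/Ei)
post = posteriors(priors, likelihoods)
print(", ".join(str(p) for p in post))  # 5/17, 6/17, 6/17
```

Although E1 makes half of the items, its low defect rate makes it the least likely source of a defective item (5/17). The posteriors always sum to 1 because the Ei are exhaustive.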